When Can AI Be Used in Research Administration?

When Can AI Be Used in Research Administration?

It Comes Down to One Question: Does It Matter How the Work Got Done?

By Nate Layman

AI tools are remarkably capable, and they are getting better fast. They can digest hundreds of pages of sponsor guidelines in seconds, draft polished narrative text from rough notes, and surface patterns in financial data that would take a human analyst hours to find. These capabilities introduce encouraging opportunities for Research Administrators across many professional contexts.

Anyone who has been tasked to manually cross-reference budget line items against a 90-page funding opportunity announcement can immediately see the appeal. AI can take tedious, time-consuming work off people’s plates, and it can do so at both scale and speed. For research administration offices that are facing increasing regulatory complexity with limited staffing, that kind of capability is a lifeline on a stormy day. The potential in AI is worth taking seriously, and this post is not an argument against it.

But precisely because these tools are so capable, it’s crucial that we are deliberate about how we deploy them. The enthusiasm around AI often outpaces conversation about where it belongs in our workflows, and just as importantly, where it doesn’t. Not every task in research administration is the same, and the consequences of overlooking their distinctions can be serious.

In 1979, an internal IBM training slide offered a warning: “A computer can never be held accountable, therefore a computer must never make a management decision.” AI is vastly more capable than the mainframes IBM had in mind, but still, nearly fifty years later, the core insight still holds. Accountability is a human quality. When a task requires someone to stand behind a judgment, to be answerable for the outcome, a computer (no matter how powerful) cannot fill that role.

That principle can be simplified to a single question: does it matter how the task was completed? The answer isn’t always yes or no; instead, it falls on a continuum.

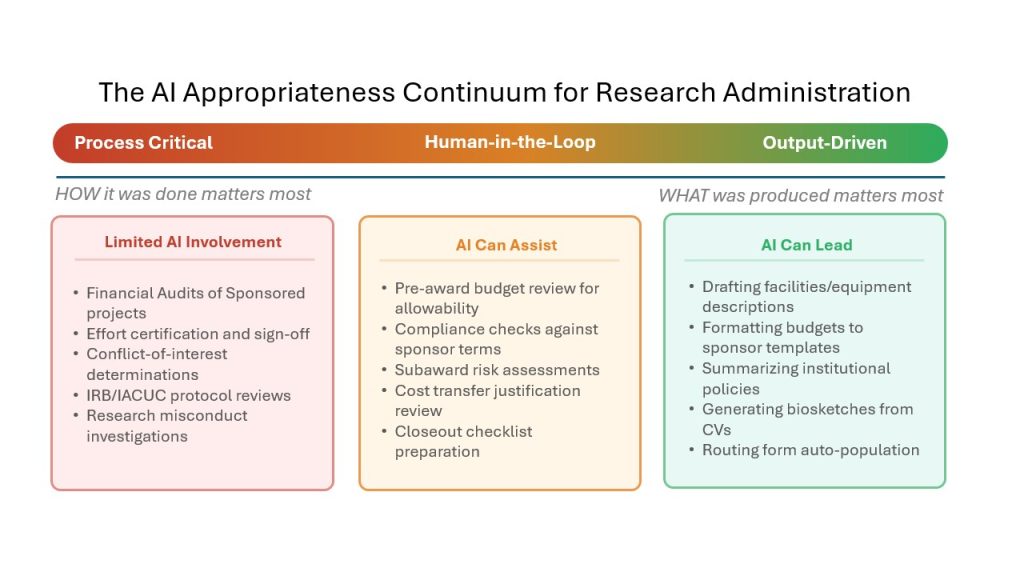

A Continuum, Not a Switch

At one end of the continuum, the integrity of the work is inseparable from the process. Its value depends not just on what was produced but also on how it was produced and who was responsible at each stage. In such cases, every step in the workflow must be auditable.

At the other end of the stage, only the output matters. Nobody asks how the final produce was created, whether it’s how formatting got done or who drafted the first version of a boilerplate paragraph or outline. Most research administration tasks fall somewhere between these two extremes.

One End: When the Process Is the Point

Some tasks exist specifically so that a qualified person can attest that they reviewed something and found it acceptable. Consider a few concrete examples:

When a department conducts a financial audit of a sponsored project, the auditor certifies not just that the numbers add up, but that they personally examined expenditures, verified allowability, and exercised professional judgment. If an AI flags every transaction as compliant, it isn’t performing an audit, but rather a scan. Similarly, a effort certification requires a principal investigator to personally confirm that the reported effort reasonably reflects how their time was actually spent. An AI cannot know that, and more importantly, cannot be held responsible if the certification turns out to be false. The same applies to IRB and IACUC protocol reviews, conflict-of-interest determinations, and research misconduct investigations. Each of these tasks derives its merit from the fact that a qualified professional stood behind the conclusion.

Powerful black boxes are still black boxes. No matter how sophisticated the model, when we use a black box, we cannot fully explain how it produces its outputs. And there is a second, equally important problem: reproducibility, where a colleague can take the same inputs, follow the same steps, and arrive at the same output every time. AI models are inherently stochastic. Ask the same question twice and you may get two different answers. In contexts where accountability depends on a transparent, human-driven process whose results can be independently verified, the combination of opacity and inconsistency that AI introduces is often disqualifying.

The Case for Auditable Tools

This doesn’t mean that technology has no role at the process-critical end of the continuum. It means we need to reach for the right kind of technology: tools whose steps can be written down, reviewed, and repeated exactly.

Data scientists have worked under this constraint for years. When an analysis needs to be defensible, they don’t reach for a tool that produces answers they can’t explain. They use functional programming in languages like R, Python, SQL, or even Excel where every transformation is an explicit, readable step in a script or file. Explicit and exact reproducibility matters most when the output needs to be exact, such as a dollar figure in an expenditure report. When the output is more qualitative (e.g., a drafted paragraph or a summarized policy), exact reproducibility is less important than obtaining a correct and complete result, which is part of what makes those tasks better suited for AI.

Research administration can apply the same principle. An AI model might give you a correct answer, but in cases where “because the model said so” proves an insufficient justification, RAs need to employ auditable tools, where the processes are reproducible and laid out in plain view. Only auditable tools can enable a comprehensive justification of the work.

The Other End: When Only the Output Matters

At the other end of the continuum are tasks where the process is essentially invisible to the recipient. Drafting a facilities and equipment description for a grant proposal is a good example: the content is largely institutional boilerplate, and no reviewer will ask whether a human or an AI assembled it. Reformatting a budget justification to meet a sponsor’s template, generating a biosketch from a CV, summarizing institutional policies for an internal routing form, or auto-populating fields on a proposal routing sheet: these are all tasks where the only measure of success is whether the final product is accurate and complete.

That said, “let AI lead” does not mean “let AI go unchecked.” Accuracy still matters, and it needs to be measured. AI tools can hallucinate facts, misread documents, or quietly introduce errors that look plausible at a glance. Before relying on AI for any output-driven task, teams should validate its performance: spot-check a sample of outputs, track error rates over time, and establish a threshold below which human review gets added back in. Trust in these tools should be earned through evidence, not assumed.

This is where the power of AI really shines. Research administrators are often stretched thin, juggling dozens of proposals and compliance deadlines simultaneously. Letting AI handle this kind of menial work at scale and speed frees up human attention for the more complex tasks further along the continuum that truly demand it.

The Middle: Human-in-the-Loop

Between the two extremes ends lies a wide range of tasks where AI can contribute meaningfully, so long as a qualified person reviews and signs off on the output. This human-in-the-loop model may be where AI delivers the most practical value.

As an example, consider a pre-award budget review. An AI agent could scan a proposed budget against a sponsor’s cost principles, flag items that appear unallowable, and organize its findings into a summary. But a grants specialist would still need to review that summary, considering context the AI may lack (such as a prior agreement with the sponsor about a specific line item), and make the final determination. Similarly, consider subaward risk assessments: an agent could pull together audit findings, SAM.gov status, and prior performance data for a subrecipient, but a human would still need to decide whether the risk level warrants additional monitoring terms. Compliance checks against sponsor terms and cost transfer justification reviews follow the same pattern.

The key distinction is accountability. If a person reviews AI-generated analysis, validates it, and puts their name behind the conclusion, the process retains its integrity. The stochastic nature of AI matters less here because the human reviewer is the one making the final judgment, not the model. The “how” still matters, but a human review and sign-off is what satisfies that requirement, rather than the condition that every preliminary step be completed manually.

Applying the Test in Practice

Before integrating any tool into a research administration workflow, I’d encourage teams to ask two questions: “If someone asked us how this task was completed, would the answer matter?” and “Could a colleague re-run it and get the same result?” For some tasks, exact reproducibility is non-negotiable. For others, a similar result may be perfectly acceptable: two AI-drafted facilities descriptions won’t be identical, word-for-word, but if both are accurate and complete, the difference might not matter. How a task relates to these questions can help determine where it belongs on the continuum.

Process Critical |

Human-in-the-Loop |

Output-Driven |

|---|---|---|

|

Use auditable, reproducible tools: scripts, queries, spreadsheets with visible logic. Every step is documented and repeatable. |

Let AI do the prep work. A qualified person reviews, validates, and signs off on the output. |

Let AI lead, but measure accuracy. Spot-check outputs, track error rates, and add review back in if quality slips. |

Moving Forward Thoughtfully

IBM’s 1979 warning was about mainframes, not large language models. But the principle it captures is timeless: accountability cannot be automated. The question isn’t whether AI has a place in research administration. It clearly does, and the potential it offers is real. The question is whether we’re thoughtful enough to place it at the right point on the continuum for each task we do, and to reach for transparent, reproducible alternatives when the process matters as much as the result.

Ultimately, research administration exists to uphold the integrity of the research enterprise. As we bring smarter and more powerful tools into our work, we must make sure that integrity stays at the center of what we do. This way, our community will only strengthen alongside our tools, developing together into the future.