Digitizing the Last Mile: OCR in Research Administration

By Luke Sheneman

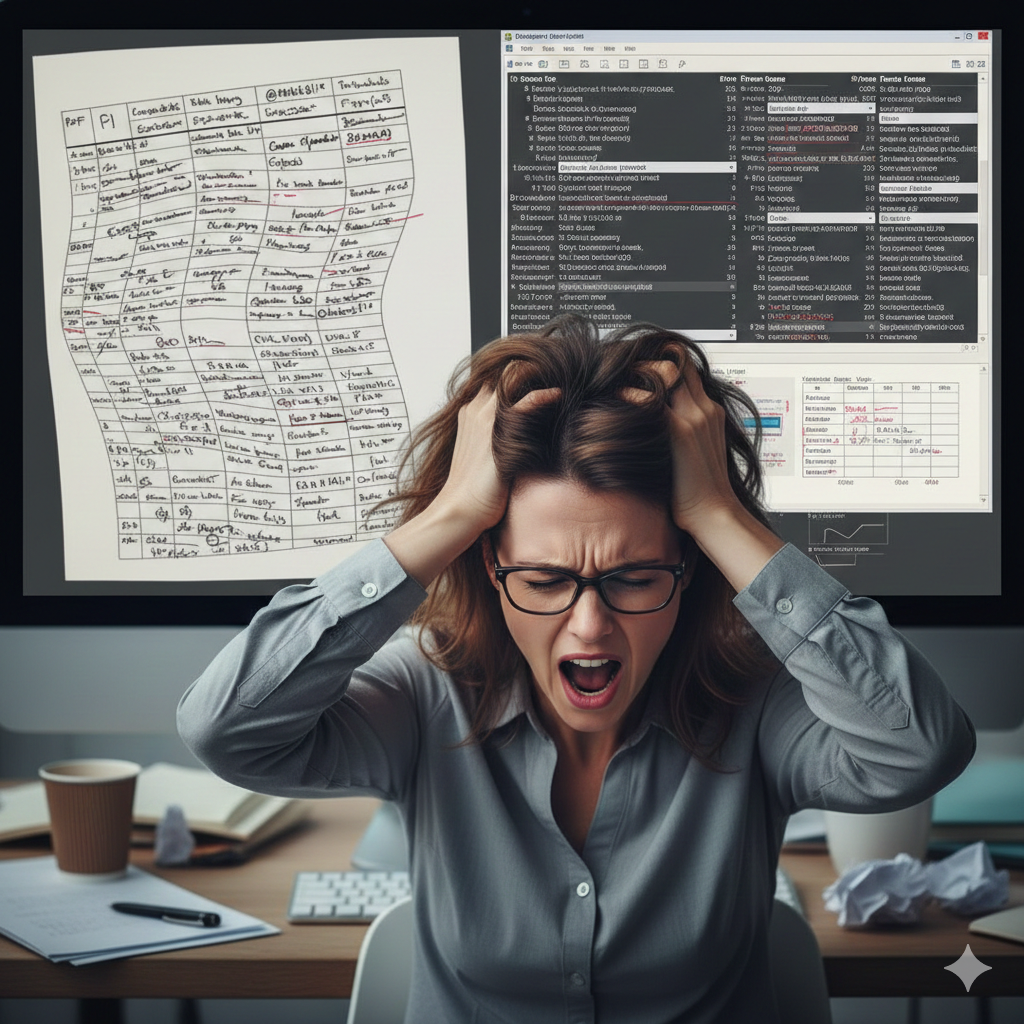

1. The Silent Crisis of Data Entry in Sponsored Programs

University research departments receive billions of dollars in federal grants each year, but managing those grants is surprisingly old-fashioned. When a university receives a grant award, the official notice arrives as a PDF, and staff must manually copy the important details, such as budget amounts and dates, into the university’s administrative systems. This “swivel-chair” process, where someone switches back and forth between a document on one screen and a data-entry form on another, is slow and error-prone. Even a small typo in a dollar amount or grant number can trigger serious compliance problems or require repayment of funds. As federal regulations grow more complex, relying on humans to read and retype thousands of pages of grant documents is no longer sustainable.

2. The “PDF Trap” and the Failure of Data Standardization

The federal government has tried for decades to make grant data more standardized and machine-readable, but these efforts haven’t fully succeeded. While universities submit grant applications through digital portals, the award notifications they receive back are still often unstructured PDF documents — not organized, computer-friendly data. These PDFs come in three problematic varieties: “born digital” files whose layout gets scrambled when copied, scanned paper documents that are really just pictures, and hybrid files that mix both types. Because each federal agency formats its award documents differently, there is no reliable way for computers to automatically read and understand them all. This creates an urgent need for smarter technology — specifically, advanced Optical Character Recognition (OCR) — to bridge the gap.
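The three varieties described above can be told apart with a simple per-page check. The following is a minimal sketch, not a production detector: `PageInfo`, its fields, and the 20-character threshold are hypothetical, and the per-page numbers are assumed to come from whatever PDF library an office already uses.

```python
from dataclasses import dataclass
from enum import Enum


class PdfKind(Enum):
    BORN_DIGITAL = "born digital"
    SCANNED = "scanned"
    HYBRID = "hybrid"


@dataclass
class PageInfo:
    """Hypothetical per-page summary supplied by a PDF library."""
    text_chars: int            # characters found in the embedded text layer
    has_full_page_image: bool  # True if the page is essentially one picture


def page_is_scanned(page: PageInfo, min_chars: int = 20) -> bool:
    # Assumption: a page-sized image with almost no embedded text is a scan.
    return page.has_full_page_image and page.text_chars < min_chars


def classify_pdf(pages: list[PageInfo]) -> PdfKind:
    """Label a document as born digital, scanned, or a hybrid of both."""
    if not pages:
        raise ValueError("document has no pages")
    scanned = [page_is_scanned(p) for p in pages]
    if all(scanned):
        return PdfKind.SCANNED
    if not any(scanned):
        return PdfKind.BORN_DIGITAL
    return PdfKind.HYBRID
```

A label like this only decides which pages need OCR at all. It does not address the scrambled layout of born-digital files, which is a separate problem.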

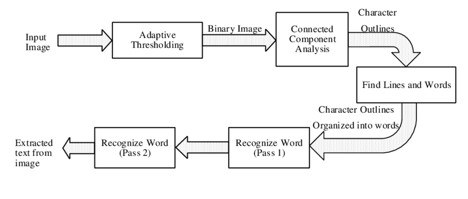

3. The Evolution of Optical Character Recognition

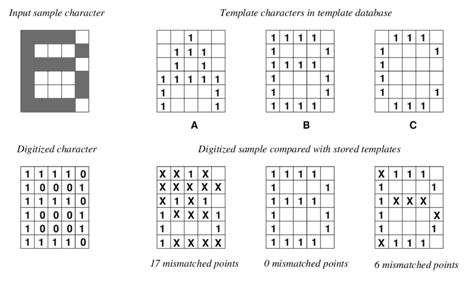

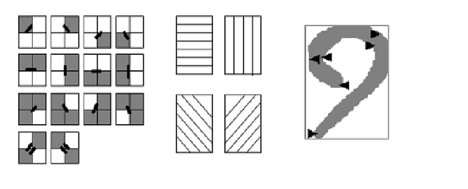

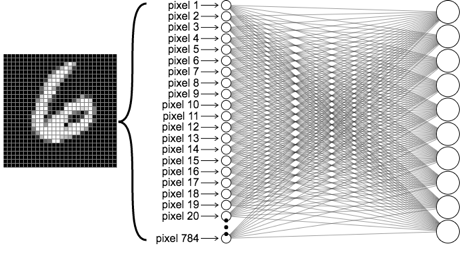

The technology capable of unlocking the data trapped in federal grant PDFs has a rich history, evolving from rudimentary pattern matching to the vision-language models of the artificial intelligence era. Understanding this trajectory helps research administrators evaluate current tools: knowing why a legacy system fails on a complex budget table requires understanding the architectural limitations of its era.

4. The Technical Anatomy of Grant Documentation

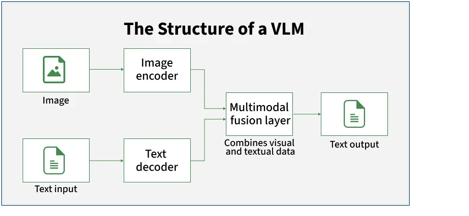

5. Generative AI Approaches to Document Understanding

Artificial intelligence has transformed the way computers can read and understand documents, but two very different approaches have emerged. The older “RAG” (retrieval-augmented generation) approach first extracts text with OCR and then feeds that text to an AI model to answer questions. If the OCR step garbles a table, the model cannot fix it. The newer “Native Vision” approach skips the separate OCR step entirely: the AI model looks directly at the document image and understands both the text and the layout in one pass. This is far more powerful, especially for complex tables and forms where meaning depends on where things appear on the page. However, because these AI models predict answers rather than simply reading them, they can sometimes produce plausible-sounding but incorrect numbers. That is a serious risk in financial contexts, which is why human review of the AI’s output remains essential.
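One way to support that human review is an arithmetic cross-check on the extracted figures. The sketch below is illustrative only: the function names are hypothetical, and it assumes the extracted award includes budget line items and a stated total as text. It flags any document whose line items do not add up, so a reviewer can look at those first.

```python
from decimal import Decimal


def parse_money(text: str) -> Decimal:
    """Turn a string such as '$1,250,000.00' into an exact Decimal."""
    return Decimal(text.replace("$", "").replace(",", "").strip())


def flag_total_mismatch(line_items: list[str], stated_total: str) -> bool:
    """Return True when the line items do not sum to the stated total."""
    computed = sum((parse_money(item) for item in line_items), Decimal("0"))
    return computed != parse_money(stated_total)
```

`Decimal` is used instead of floating point so that cents compare exactly. A passing check does not prove the numbers are right, since two errors can cancel out, so it complements review and does not replace it.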

6. Review of OCR Solutions for Research Administrators

There are many OCR tools available today, ranging from free open-source software to expensive enterprise cloud services. Free tools like the open-source Tesseract engine, long sponsored by Google, are useful for simple text but fail badly on the complex layouts found in grant award documents. Cloud services from Amazon, Google, and Microsoft are more capable and easier to use, but they require sending sensitive grant data to outside servers, which raises privacy and security concerns, especially for grants with federal data restrictions. Specialized platforms like ABBYY and Rossum can be highly accurate but are expensive, rigid, and difficult to update when document formats change. For universities that need both power and data privacy, the newest Vision-Language Models represent the most promising direction.

7. Why dots.OCR is the Preferred OCR Choice for Research Administrators in 2026

After evaluating all available options, dots.OCR stands out as the best choice for university research offices in 2026 for four key reasons. First, it uses a single AI model that reads the entire page at once, understanding tables the way a human would rather than reading awkwardly line by line. Second, it achieves the highest accuracy scores on complex tables — outperforming even Google’s most advanced models on a standard industry benchmark. Third, it is compact enough to run on standard university hardware without ever sending data to an outside cloud service, keeping sensitive grant information secure on campus. Fourth, it can be guided with plain-language instructions to adapt to different document formats, so it does not require expensive technical reconfiguration every time an agency updates its paperwork.

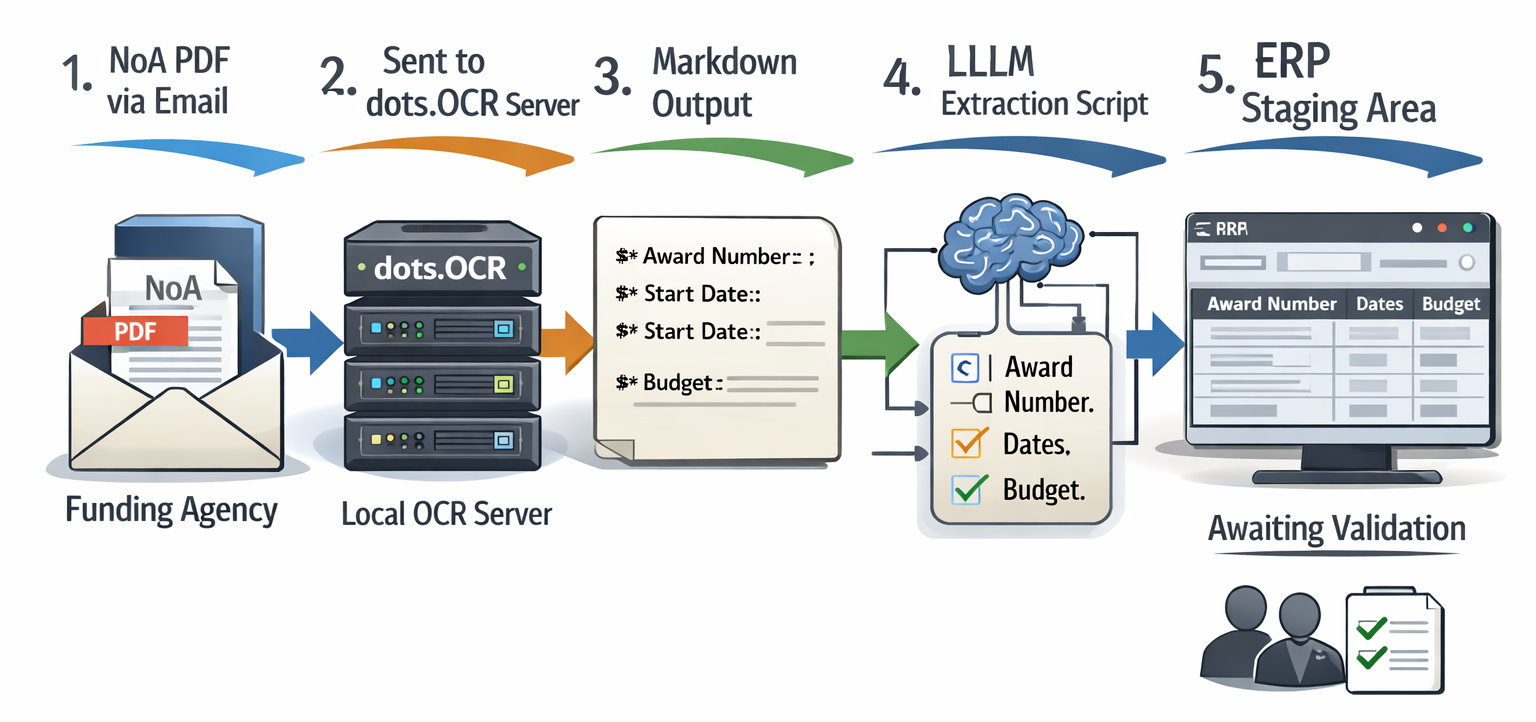

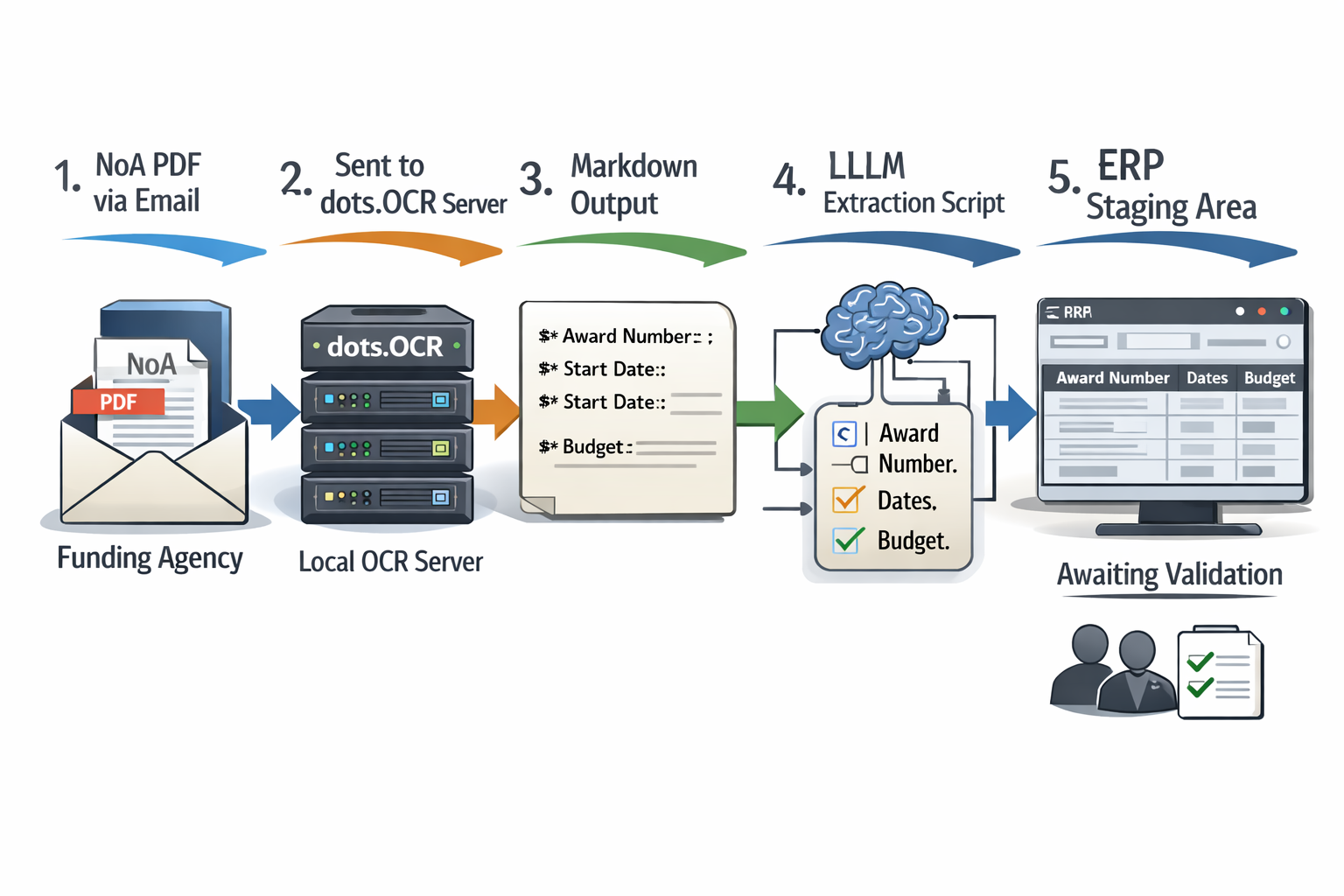

8. Implementation Guide for Research Administrators

Putting dots.OCR to work does not require a supercomputer — a standard workstation with a modern graphics card is sufficient, and it can even run on a recent Mac laptop. The University of Idaho’s AI4RA team has developed a free, open-source tool called dots_ocr_api that makes it easy to submit a PDF and receive back structured, machine-readable text. A typical workflow would have the system automatically receive a grant award PDF, pull out the key data fields, and stage them for staff to review and approve before anything is entered into the university’s financial system. This human review step is important because even the best AI is not perfect — especially on very low-quality scans or handwritten notes in the margins. A tool called Vandalizer, currently in development, aims to make these workflows even simpler, requiring no technical knowledge from the user.
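The stage-then-approve workflow described above can be sketched as a small data structure. This is an assumption-laden illustration, not the dots_ocr_api interface: `StagedAward`, `stage`, `approve`, and `ready_for_entry` are hypothetical names, and the extracted fields are assumed to arrive as a plain dictionary of strings.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING_REVIEW = "pending review"
    APPROVED = "approved"


@dataclass
class StagedAward:
    """Extracted fields held for review before entry into the financial system."""
    source_pdf: str
    fields: dict[str, str]
    status: Status = Status.PENDING_REVIEW
    reviewer: Optional[str] = None


def stage(source_pdf: str, extracted_fields: dict[str, str]) -> StagedAward:
    # Copy the fields so later corrections do not alter the raw extraction.
    return StagedAward(source_pdf=source_pdf, fields=dict(extracted_fields))


def approve(record: StagedAward, reviewer: str,
            corrections: Optional[dict[str, str]] = None) -> None:
    # Apply any reviewer corrections, then record who signed off.
    record.fields.update(corrections or {})
    record.reviewer = reviewer
    record.status = Status.APPROVED


def ready_for_entry(records: list[StagedAward]) -> list[StagedAward]:
    # Only approved records may move on to the financial system.
    return [r for r in records if r.status is Status.APPROVED]
```

The design choice worth noting is that nothing reaches `ready_for_entry` without a named reviewer, which keeps the human review step from being skipped by accident.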

9. Conclusion

The burden of manually re-entering grant data from PDF documents is a hidden cost that slows down research and creates compliance risks across university research offices. The good news is that modern AI-powered OCR technology has finally matured to the point where it can reliably read even the most complex federal grant documents. dots.OCR in particular represents a major step forward, offering the accuracy of expensive cloud services in a package that can run securely on campus without outside data sharing. By automating the most tedious parts of grant setup, research administrators can spend more time on meaningful work — supporting faculty researchers and managing compliance risk. The digital future of research administration is here, and it fits on a standard office workstation.